Did I Break You? Reverse Dependency Verification

SoundCloud was founded 13 years ago, and throughout its history, the company and much of its tech stack have changed. We started with a monolithic Ruby on Rails app and have since worked to extract, isolate, and reuse logic in many subsystems, and later in separate microservices. We introduced different storage mechanisms and data analysis apps, and our frontend has expanded from a single website to mobile apps, integrations with third parties, an Xbox app, and more recently, a full-fledged PWA to run on Chromebooks.

Golden Paths

Internally, we’re committed to giving teams the flexibility to pick whatever tools they deem best to solve the technological challenges of everyday product development. While this means a lot of autonomy, it also gradually led to a fragmented environment of languages, frameworks, processes, and documents, making cross-team collaboration and onboarding harder. We have therefore moved to a model of providing sensible recommendations and supporting an opinionated tech stack.

In that sense, complexity became opt-in: In general, libraries, tooling, and the engineering experience should be as simple as possible, with no configuration, but still be able to support more complex use cases when a problem’s requirements call for it.

With time, we identified similarities in the implementations of our solutions and choices that were naturally repeated. Engineers experienced with a given programming language were more likely to pick the same language for their next project, while in other cases, the ease of integration with, say, a specific database engine pushed a new integrator to choose it again. We built more tooling to help develop these existing apps, we wrote more documentation to cover the “gotchas” of a framework, standards arose to organize the system architecture, etc.

This cycle ended up consolidating some ideas as de facto Golden Paths for our different disciplines: web development, data science, infrastructure, etc. Engineers are still free to choose their tooling, but the positive reinforcement cycle was already set: Why spend time reinventing the wheel when so much has already been invested in building mature solutions? Deviations from the Golden Paths exist, but they’ve become increasingly rare.

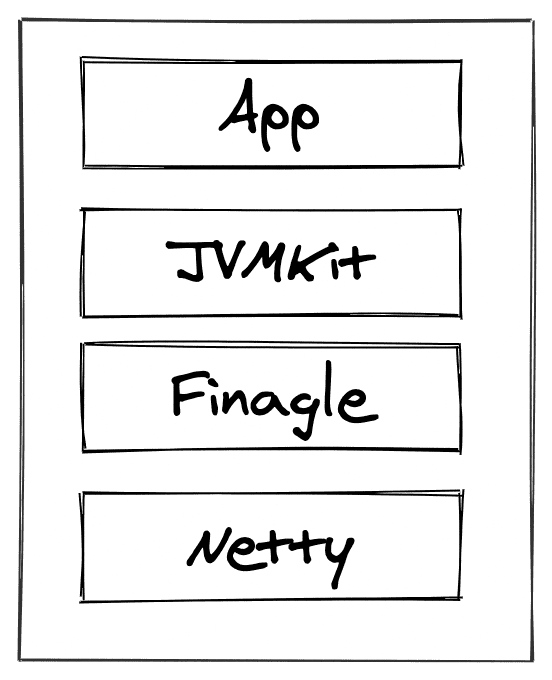

JVMKit

We settled on the Golden Path for developing backend applications in the JVM ecosystem. Since adopting Twitter’s Finagle, we’ve built extensive tooling on top of it, to the point where we could derive a library of our own: JVMKit, which helps build JVM applications by providing implementations of common patterns and protocols used at SoundCloud.

Because Scala applications make up the vast majority of apps at SoundCloud, JVMKit mainly targets them, but it should be usable from any other JVM language. We currently also run services in Java, Clojure, and JRuby, even though we try to steer everybody toward the Golden Path. JVMKit provides opt-in modules for serving public traffic in the shape of BFFs, as well as integration with MySQL, Kafka, Memcached, and Prometheus, among other functionality.

With such a broad audience, it’s no surprise that JVMKit was widely adopted across SoundCloud, and it powers much of what we provide to our users. It became a critical component of our technology stack, with hundreds of services depending on it and thousands of instances running it in production.

JVMKit’s repository sees active development: New features, bug fixes, and security patches land every week. Unlike an open source project, whose dependent projects are unknown, within the SoundCloud organization we can get a clear view of all callers of JVMKit’s APIs and see which apps are lagging behind and which are on the cutting edge.

We then ask ourselves: How exactly can we make sure the entire company follows JVMKit’s release cycle? As a platform team and the developers of JVMKit, we want to minimize disruptions to each team’s roadmap, such as onboarding onto new APIs or replacing deprecated calls. In other words, we want to minimize the work we put on our colleagues’ plates so they can focus on higher objectives. To that end, we have built automation to perform batch upgrades across all of our repositories. Ideally, JVMKit upgrades are completely transparent.

Reverse Dependency Graph

To identify which services depend on JVMKit, we rely on these relationships being explicitly defined in code. As part of the Golden Path for backend development introduced earlier, we do that through the build scripts that are part of each repository. For the majority of our backend repositories, this means sbt builds. The same idea applies to our other Golden Paths, with Gradle on Android and npm for web development. For example, an sbt build declares its JVMKit dependencies like this:

val jvmkitVersion = "15.0.0"

lazy val root = (project in file("."))

.settings(

libraryDependencies ++= Seq(

"com.soundcloud" %% "jvmkit-admin-server" % jvmkitVersion,

"com.soundcloud" %% "jvmkit-json-play" % jvmkitVersion,

...

)

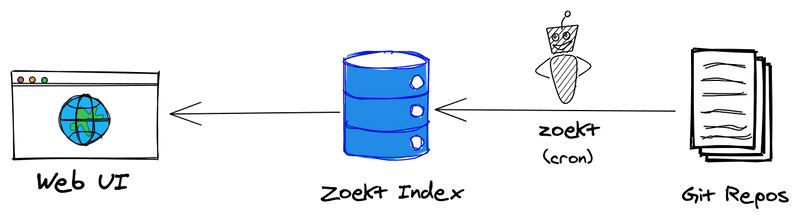

)Every five minutes, changes to the default branch of each of SoundCloud’s repositories hosted on GitHub are indexed by Zoekt, a search engine based on regular expression matching (read this article for more in-depth information). Out of the box, Zoekt provides a web interface that we then make available to all SoundCloud engineers, but we also added the ability to query its results through a simple scripting API.

By combining Zoekt’s API with the standardization across the company, we can write a simple query like "jvmkit" f:^build.sbt and list all the projects that depend on JVMKit. In other words, we can build one level of the reverse dependency graph, with JVMKit as the root.
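As a rough illustration of that last step, the sketch below collapses search hits into a list of dependent repositories. It does not call Zoekt: the `SearchHit` shape and the `ReverseDependencies` helper are hypothetical, and the filter only approximates the intent of the query (a file name starting with build.sbt and a matched line mentioning jvmkit).

```scala
// Hypothetical shape of a single search hit; not Zoekt's actual response format.
final case class SearchHit(repository: String, filePath: String, line: String)

object ReverseDependencies {
  // Approximates the intent of the query `"jvmkit" f:^build.sbt`:
  // the file name starts with build.sbt and the matched line mentions jvmkit.
  def isJvmkitBuildHit(hit: SearchHit): Boolean =
    hit.filePath.startsWith("build.sbt") && hit.line.contains("jvmkit")

  // Collapse hits into a sorted, de-duplicated list of dependent repositories.
  def dependents(hits: Seq[SearchHit]): Seq[String] =
    hits.filter(isJvmkitBuildHit).map(_.repository).distinct.sorted
}
```

Several hits in the same build file (the version value plus each module line) collapse into a single entry, so each repository appears once in the resulting level of the graph.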

Verification

With these tools at hand, we can now start thinking about how we can automatically verify whether a change in JVMKit will integrate smoothly with other projects. For the sake of the example, let’s take an arbitrary service called likes, which is responsible for managing which tracks each user liked on SoundCloud. If we were to write such an algorithm in pseudocode, this is how it would look:

1. Clone "likes" repository
2. Find build.sbt
3. Replace the jvmkitVersion value with the latest snapshot
4. Compile "likes"

If all steps in the algorithm succeed, we guarantee that the new changes in JVMKit didn’t cause issues with likes. We can generalize this idea and run the algorithm for each and every repository we previously identified as reverse dependencies of JVMKit. If all of them succeed, the new JVMKit version is good to go!
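Step 3 of the pseudocode is where the standardized build layout pays off. A minimal sketch, assuming every build.sbt declares the version exactly as `val jvmkitVersion = "..."` like the example shown earlier; the `VersionBump` object and the snapshot version string used below are illustrative, not part of JVMKit’s tooling:

```scala
import scala.util.matching.Regex

object VersionBump {
  // Matches a declaration such as: val jvmkitVersion = "15.0.0"
  // Group 1 captures everything up to the opening quote of the value.
  private val VersionLine: Regex =
    """(val\s+jvmkitVersion\s*=\s*)"[^"]*"""".r

  // Returns the rewritten build file, or None when no jvmkitVersion
  // declaration exists, so the caller can treat that as a failed step.
  def bump(buildSbt: String, snapshot: String): Option[String] =
    VersionLine.findFirstIn(buildSbt).map { _ =>
      val replacement = "$1" + Regex.quoteReplacement("\"" + snapshot + "\"")
      VersionLine.replaceFirstIn(buildSbt, replacement)
    }
}
```

For instance, `VersionBump.bump(contents, "15.1.0-SNAPSHOT")` rewrites only the version value and leaves the rest of the build definition untouched.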

Our work is done, right? Not really. There are other cases that our algorithm doesn’t consider yet. For instance, what happens if we plan to release a JVMKit version and, coincidentally, the main branch of likes is broken that day? We shouldn’t blame that failure on the JVMKit version bump. To isolate that scenario, we can first compile the target project with no changes whatsoever: If this baseline build already fails, the problem lies in the project, not in JVMKit.

If JVMKit’s new version introduces an API-breaking change (and thus requires a major version bump), the engineers running the upgrade can fix the breakage automatically by executing a set of processors directly on each repository’s source code, using tools like sed, awk, lint, etc.
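One way to picture such processors is as plain text-to-text functions applied in sequence, much like a chain of sed expressions. The sketch below is an assumption about their shape rather than the actual tooling, and the identifiers being renamed in the usage note are made up for illustration:

```scala
object Processors {
  // A processor rewrites the text of one source file.
  type Processor = String => String

  // sed-like literal rename; `from` and `to` are plain text, not regexes.
  def rename(from: String, to: String): Processor =
    source => source.replace(from, to)

  // Apply processors in order, feeding each result into the next one.
  def runAll(processors: Seq[Processor])(source: String): String =
    processors.foldLeft(source)((text, processor) => processor(text))
}
```

A major release that renamed a hypothetical `OldClient` to `NewClient` could then ship with `Seq(Processors.rename("OldClient", "NewClient"))`, to be run over every Scala file of each dependent repository.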

Now, when the release is a minor or patch change, surely the project will compile, but does it behave as we expect it to? We should also run automated tests to ensure our business logic is still respected. Once again, here we can leverage the standardization provided by the Golden Path: All Scala projects that are built with sbt will execute their tests with the same command: sbt test.

Finally, the pseudocode presented earlier can be extended to become:

1. Clone "likes" repository
2. Compile "likes"
3. Run tests on "likes"
4. Find build.sbt
5. Replace the jvmkitVersion value with the latest snapshot
6. Run source code processors for automatic API fixing
7. Compile "likes"
8. Run tests on "likes"

With this, it becomes clearer who the culprit for a failure is, depending upon which step of this sequence the algorithm stops. We’re also more confident of a smooth integration if it completes successfully.
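The attribution logic can be sketched as follows. This is a simplified model, not the real runner: the step names mirror the pseudocode, the `Verification` object is hypothetical, and the function that actually executes a step is injected so the example stays independent of git and sbt.

```scala
object Verification {
  sealed trait Phase
  case object Baseline extends Phase // steps 1-3: project left untouched
  case object Upgrade extends Phase  // steps 4-8: new JVMKit version applied

  final case class Step(name: String, phase: Phase)

  // The eight steps of the extended pseudocode, in order.
  val steps: Seq[Step] = Seq(
    Step("clone", Baseline),
    Step("compile", Baseline),
    Step("test", Baseline),
    Step("find build.sbt", Upgrade),
    Step("bump jvmkitVersion", Upgrade),
    Step("run processors", Upgrade),
    Step("compile", Upgrade),
    Step("test", Upgrade)
  )

  // Runs steps in order and stops at the first one that fails.
  // `run` returns true when the step succeeded.
  def firstFailure(run: Step => Boolean): Option[Step] =
    steps.find(step => !run(step))

  // Translates the stopping point into a human-readable attribution.
  def verdict(failure: Option[Step]): String = failure match {
    case None                       => "compatible"
    case Some(Step(name, Baseline)) => s"already broken before the upgrade, at '$name'"
    case Some(Step(name, Upgrade))  => s"fails only after the upgrade, at '$name'"
  }
}
```

In a real runner, `run` would probably shell out to git and sbt, for example through `scala.sys.process`, and treat a zero exit code as success.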

Taking It a (Pipeline) Step Further

Instead of going through the described process manually and in an ad hoc fashion every time we decide to release a new build of JVMKit, we can develop the automation even further and leverage a continuous integration system to perform the checks for every single commit in the library repository.

As mentioned earlier, at SoundCloud, we have hundreds of projects that rely on JVMKit, which makes compiling and running their tests a time-consuming task. To speed up JVMKit’s development and feedback loop, we decided to split the automation into two:

- A nightly, long-running pipeline that verifies the new JVMKit against all projects in the organization

- A faster pipeline, limited to a small allowlist of projects, that runs on every pull request

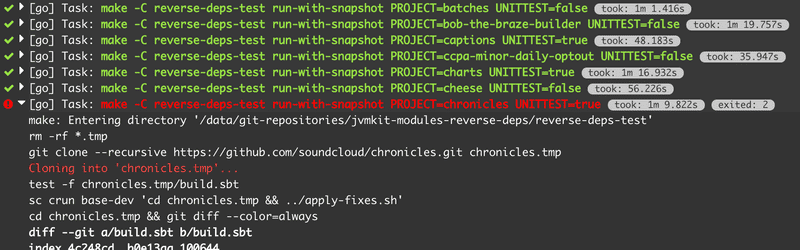

This is achieved through scripts that fetch project lists from Zoekt and translate them into pipeline descriptors that can be ingested by our CD system. The generated pipelines include steps to run tests, depending on whether these exist on each of the target projects.

On top of that, in the real world, not all projects are well behaved. There are different reasons we might want to skip specific steps of the verification algorithm: when the test suite takes too long, if it’s known to be flaky, or when the project needs a specific environment configuration or special dependencies that aren’t provided out of the box. For these cases, we also introduced a denylist that serves as input to the CI pipeline generator script.
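To make the moving parts concrete, here is a small sketch of how such a generator could combine the project list, the allowlist, and the denylist. The `ProjectPipeline` descriptor is hypothetical (a real CD system defines its own format), and the denylist is simplified to mean "skip the test step" only.

```scala
object PipelineGenerator {
  sealed trait Mode
  case object Nightly extends Mode     // every reverse dependency
  case object PullRequest extends Mode // allowlisted projects only

  // Hypothetical descriptor for one project's verification pipeline.
  final case class ProjectPipeline(project: String, steps: Seq[String])

  def generate(
      mode: Mode,
      dependents: Seq[String],     // e.g. the project list fetched from Zoekt
      allowlist: Set[String],      // small set verified on every pull request
      skipTests: Set[String],      // denylist: projects whose tests are skipped
      hasTests: String => Boolean  // whether a project has a test suite at all
  ): Seq[ProjectPipeline] = {
    val selected = mode match {
      case Nightly     => dependents
      case PullRequest => dependents.filter(allowlist.contains)
    }
    selected.map { project =>
      val runTests = hasTests(project) && !skipTests.contains(project)
      val steps = Seq("compile") ++ (if (runTests) Seq("test") else Seq.empty)
      ProjectPipeline(project, steps)
    }
  }
}
```

Keeping the allowlist and denylist as plain inputs means the same generator serves both pipelines, and adjusting either list does not require touching the generator itself.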

Summing It All Up

Observing the running pipelines is an engaging activity. Every day, we get a view of how our projects will behave when moving forward, and when failures occur, it’s also easy to pinpoint the reasons: If a single project fails due to an API change, we can dive into possible shortcomings of its implementation or uses of JVMKit’s APIs that weren’t originally intended. If many (or all) projects fail, then it’s obvious that the issue is with JVMKit itself, and we can quickly act on providing fixes.

We’ve reached an interesting point in our development practices for JVMKit after years of investment: With good tooling and automation in every step of the process, we’re confident when releasing a change to the library that powers dozens of teams, hundreds of systems, thousands of instances, and millions of users. We’re fearless about releasing JVMKit more often than ever with no impact on or disruption to our teams’ planned roadmaps, while also ensuring technological standardization across SoundCloud.