SoundCloud Is Playing the Oboe

Media and playback are at the core of SoundCloud’s experience. For that reason, we have established and grown an engineering team that specializes in providing the best possible streaming experience to our users across multiple platforms.

To do this, we combine the industry’s best-fitting solutions with our own custom technologies, libraries, and tools. In this article, let’s dive into how we improved latency in our Android application by leveraging a new engine for our player’s audio sink.

Maestro powers our web applications, but for our natively compiled apps, we created Flipper, a lightweight cross-platform audio streaming engine written in C++. Flipper drives tens of millions of plays per day in our Android and iOS mobile apps. Because it is designed with SoundCloud’s use cases in mind, it gives us the flexibility we need for innovation and fast delivery.

Flipper

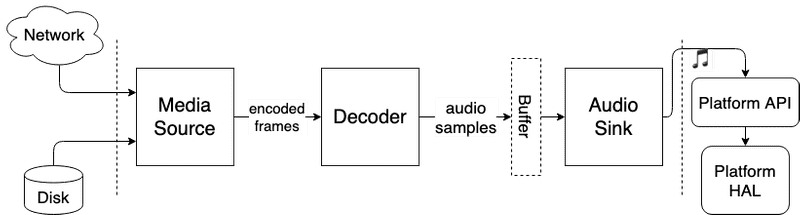

As is the case with many other media players, Flipper is designed as a pipeline of components, each of which is responsible for one step of the streaming process. Having multiple discrete steps (or stages) allows for parallel processing of a continuously flowing stream of data. Think of it like a car manufacturing plant: While one group of workers is focused on constructing the engine of the car, someone else is assembling the tires.
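To make the pipeline idea concrete, here is a minimal single-threaded sketch in C++. It is not Flipper’s actual code: `Chunk`, `Stage`, `Pipeline`, and the two example stages are hypothetical names chosen for illustration, and a real player would run each stage concurrently with queues in between rather than calling them one after another.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <functional>
#include <utility>
#include <vector>

// A chunk of 16-bit PCM samples flowing through the pipeline (hypothetical type).
using Chunk = std::vector<std::int16_t>;

// A stage takes one chunk and returns the transformed chunk.
using Stage = std::function<Chunk(Chunk)>;

// Passes a chunk through every stage in the order the stages were added.
// This is a sequential stand-in for stages that would normally run in parallel.
class Pipeline {
public:
    void addStage(Stage stage) {
        assert(stage && "a stage must be callable");
        stages_.push_back(std::move(stage));
    }

    Chunk process(Chunk chunk) const {
        for (const Stage& stage : stages_) {
            chunk = stage(std::move(chunk));
        }
        return chunk;
    }

    std::size_t stageCount() const { return stages_.size(); }

private:
    std::vector<Stage> stages_;
};

// Example stage: halves the amplitude of every sample.
inline Chunk halveGain(Chunk chunk) {
    for (std::int16_t& sample : chunk) {
        sample = static_cast<std::int16_t>(sample / 2);
    }
    return chunk;
}

// Example stage: duplicates each mono sample into a left/right pair.
inline Chunk monoToStereo(Chunk chunk) {
    Chunk stereo;
    stereo.reserve(chunk.size() * 2);
    for (std::int16_t sample : chunk) {
        stereo.push_back(sample);
        stereo.push_back(sample);
    }
    return stereo;
}
```

Adding `halveGain` and then `monoToStereo` to a `Pipeline` and calling `process` applies both transformations in that order. The stages know nothing about each other, which is what allows a single stage to be swapped out later.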

For the scope of this article, let’s focus on one single component: the sink. This is the last stage in the pipeline, and the sink’s responsibility is to offload the decoded audio samples to the underlying hardware abstraction layer (HAL) of the operating system on which the application is running. This must happen on time: if samples arrive late, the hardware runs out of data to play and the listener hears unwanted audio artifacts (crackling or popping).

Having the sink as a component abstraction is very useful when building Flipper, since it makes the code portable. As a result, we can expose a single API to the rest of the stages in the pipeline, regardless of the specific platform it runs on. On iOS, the platform-specific part is handled by Core Audio’s APIs, while on Android, the samples are handed off through a different set of bindings.
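A sink abstraction of this kind could look roughly like the sketch below. The interface and all names in it (`AudioSink`, `FakePlatformSink`, `drainInto`) are assumptions made for illustration and are not Flipper’s real API. The fake backend stands in for a platform implementation and accepts only a limited number of samples per call, to show that upstream code depends on the interface alone.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <string>
#include <utility>
#include <vector>

// Hypothetical sink interface shared by every platform backend.
class AudioSink {
public:
    virtual ~AudioSink() = default;
    virtual std::string backendName() const = 0;
    // Hands decoded PCM samples to the platform and returns how many were accepted.
    virtual std::size_t write(const std::int16_t* samples, std::size_t count) = 0;
};

// Stand-in backend that accepts at most `capacityPerWrite` samples per call,
// mimicking a platform buffer that may take only part of a write.
class FakePlatformSink : public AudioSink {
public:
    FakePlatformSink(std::string name, std::size_t capacityPerWrite)
        : name_(std::move(name)), capacityPerWrite_(capacityPerWrite) {}

    std::string backendName() const override { return name_; }

    std::size_t write(const std::int16_t* samples, std::size_t count) override {
        const std::size_t accepted = std::min(count, capacityPerWrite_);
        received_.insert(received_.end(), samples, samples + accepted);
        return accepted;
    }

    const std::vector<std::int16_t>& received() const { return received_; }

private:
    std::string name_;
    std::size_t capacityPerWrite_;
    std::vector<std::int16_t> received_;
};

// Upstream code: knows only the interface and keeps writing until the sink
// has taken every sample or stops accepting data. Returns the samples written.
inline std::size_t drainInto(AudioSink& sink, const std::vector<std::int16_t>& samples) {
    std::size_t offset = 0;
    while (offset < samples.size()) {
        const std::size_t remaining = samples.size() - offset;
        const std::size_t accepted = sink.write(samples.data() + offset, remaining);
        assert(accepted <= remaining && "a sink must not accept more than it was given");
        if (accepted == 0) {
            break;  // The sink is stalled; stop instead of spinning forever.
        }
        offset += accepted;
    }
    return offset;
}
```

With this shape, a Core Audio backend and an Android backend would each be one more subclass of `AudioSink`, and `drainInto` would not change.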

Android Audio APIs

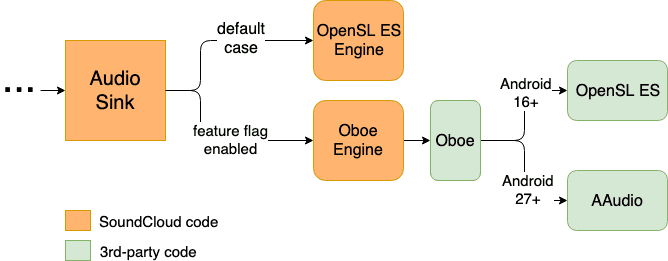

The Android OS has evolved over the years, and its audio functionality has evolved along with it. As a result, there are now several sets of audio APIs. In Android Gingerbread (2.3), the Open Sound Library for Embedded Systems (OpenSL ES), a C library built with portability (and mobile) in mind, became the recommended interface to the audio hardware. Both the application-level player (MediaPlayer) and third-party developers have access to OpenSL ES APIs via the Android NDK.

Even though OpenSL ES supported Android’s use cases for a considerable amount of time, it started to show its age as applications evolved to require higher performance. Beginning with Android Oreo (8.0), Google integrated a new C library with a simpler API surface to target these low-latency scenarios: AAudio.

Since then, Google has been advising developers to use AAudio for building audio applications. However, AAudio is not available on versions of Android older than Oreo, which becomes an issue when targeting a large audience of users spread across many OS versions. Developers still have to maintain code for OpenSL ES bindings if they want to provide audio capabilities on older versions of the operating system. On top of that, manufacturers also change the behavior of the APIs, deviating from the official documentation Google provides and forcing all sorts of workarounds and device-specific hacks.

Enter Oboe, a C++ wrapper built on top of OpenSL ES and AAudio that exposes an “AAudio-like” interface, includes fixes (or workarounds) for known manufacturer-specific issues, and automatically picks the most suitable audio API based on a device’s capabilities. If you’re already familiar with Android development, this is the same idea behind the Support Libraries: Oboe provides a compatibility layer between the application and platform code.
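The kind of decision Oboe makes on the application’s behalf can be modeled with a few lines of C++. This is a simplified illustration and not Oboe’s source code: `DeviceInfo`, `chooseAudioApi`, and the `aaudioKnownBroken` flag are hypothetical, and the API level 27 threshold is an assumption based on Oboe’s documented preference for AAudio from Android 8.1 onward.

```cpp
#include <cassert>
#include <string>

// The two native audio APIs discussed in this article.
enum class AudioApi { OpenSLES, AAudio };

// Hypothetical description of the device the app is running on.
struct DeviceInfo {
    int sdkVersion;          // Android API level, e.g. 26 for Android 8.0
    bool aaudioKnownBroken;  // Illustrative flag for a manufacturer-specific quirk
};

// Assumption: AAudio is preferred from API level 27 (Android 8.1) onward.
constexpr int kFirstSdkPreferringAAudio = 27;

// Picks AAudio when the OS is recent enough and no quirk rules it out,
// and falls back to OpenSL ES otherwise.
inline AudioApi chooseAudioApi(const DeviceInfo& device) {
    assert(device.sdkVersion > 0 && "API levels are positive");
    if (device.sdkVersion >= kFirstSdkPreferringAAudio && !device.aaudioKnownBroken) {
        return AudioApi::AAudio;
    }
    return AudioApi::OpenSLES;
}

inline std::string toString(AudioApi api) {
    return api == AudioApi::AAudio ? "AAudio" : "OpenSL ES";
}
```

The value for the application is that this decision, together with the device-specific workarounds, lives in one maintained library instead of in every app.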

SoundCloud and Oboe

The cost of maintaining two implementations of our Flipper sink on Android and the risk of replacing a critical part of our already battle-tested core playback library were among the reasons we postponed integrating our player with AAudio. The release of Oboe, and later its graduation to a stable version, motivated us to work on a prototype integration.

Following our usual rollout process, we started by deploying Oboe to a controlled subgroup of our userbase by using a remote feature toggle, and as we got more confident with the performance in production, we extended its availability.
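One common way to implement such a gradual rollout is to hash each user into a stable bucket and compare it with a remotely configured percentage. The sketch below assumes that approach; the article does not describe how our toggle is implemented, so `isInRollout`, `selectSink`, and the percentage parameter are all hypothetical.

```cpp
#include <cassert>
#include <cstdint>
#include <string>

// 32-bit FNV-1a hash, used here so that bucketing is identical on every device.
inline std::uint32_t fnv1a(const std::string& text) {
    std::uint32_t hash = 2166136261u;  // FNV offset basis
    for (unsigned char c : text) {
        hash ^= c;
        hash *= 16777619u;  // FNV prime
    }
    return hash;
}

// Maps the user to a bucket in [0, 100) and enables the feature when the
// bucket is below the remotely configured rollout percentage.
inline bool isInRollout(const std::string& userId, int rolloutPercent) {
    assert(rolloutPercent >= 0 && rolloutPercent <= 100);
    const int bucket = static_cast<int>(fnv1a(userId) % 100u);
    return bucket < rolloutPercent;
}

enum class SinkKind { OpenSLES, Oboe };

// Chooses the sink implementation for this user (hypothetical helper).
inline SinkKind selectSink(const std::string& userId, int rolloutPercent) {
    return isInRollout(userId, rolloutPercent) ? SinkKind::Oboe : SinkKind::OpenSLES;
}
```

Because the bucket depends only on the user ID, a user who receives the new sink at 10 percent keeps it when the percentage is raised, which keeps the measured population stable as availability is extended.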

It’s been a few months since we began measuring Oboe’s integration with Flipper in production, and while it is still a rather new tool, it has proven to be beneficial to us in many ways.

For one, the global community united around improving Oboe introduces new concepts and capabilities faster than we could internally. It also provides us with access to the experts behind the platform APIs, along with the possibility to contribute back to the upstream repository, due to its open-source nature. Finally, the combination of Oboe and AAudio gives us richer insights into the system’s HAL and its performance. For example, it reports the number of buffer underruns, which allows for additional optimizations.
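As an example of an optimization that underrun counts make possible, a player can grow its buffer by one burst whenever new underruns are reported. The sketch below models that pattern with plain structs. It is an illustration, not a description of what Flipper does: `StreamStats` and `BufferTuner` are hypothetical, and in a real integration the values would come from Oboe stream calls such as `getXRunCount()`, `getFramesPerBurst()`, and `getBufferCapacityInFrames()`, with the result applied through `setBufferSizeInFrames()`.

```cpp
#include <algorithm>
#include <cassert>

// Snapshot of the values a stream reports (hypothetical struct).
struct StreamStats {
    int xRunCount;             // Cumulative number of underruns so far
    int bufferSizeFrames;      // Current buffer size
    int framesPerBurst;        // Frames the device consumes per callback
    int bufferCapacityFrames;  // Largest buffer size the stream allows
};

// Grows the buffer by one burst each time new underruns appear,
// never exceeding the stream's capacity.
class BufferTuner {
public:
    // Returns the buffer size, in frames, to request next.
    int nextBufferSize(const StreamStats& stats) {
        assert(stats.framesPerBurst > 0 && "burst size must be positive");
        int size = stats.bufferSizeFrames;
        if (stats.xRunCount > lastXRunCount_) {
            size = std::min(size + stats.framesPerBurst, stats.bufferCapacityFrames);
        }
        lastXRunCount_ = stats.xRunCount;
        return size;
    }

private:
    int lastXRunCount_ = 0;  // Underrun count seen on the previous call
};
```

The trade-off is that every added burst makes glitches less likely but adds latency, so the tuner only grows the buffer in response to underruns that were actually observed.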

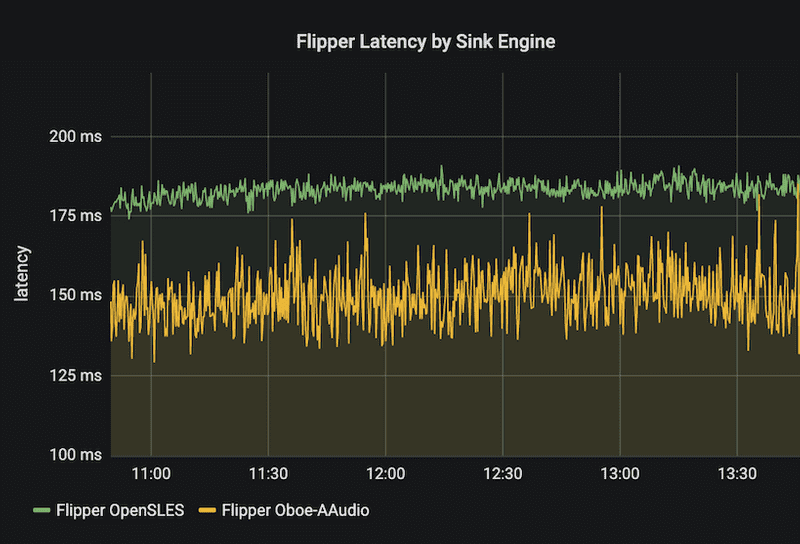

Most importantly, our users benefit as well: We have been able to provide a playback experience with lower latency. Our telemetry systems show that AAudio contributed to a decrease of approximately 10 percent in overall latency compared to OpenSL ES, measured over the same sample size.

All in all, we are happy with the results of what started as a prototype implementation, and we’re aiming to make the new audio sink available to our entire userbase in the near future, while still keeping an eye open for further improvements to latency and the playback experience in general.

If you’re interested in media streaming, Android, large-scale challenges, and what we do at SoundCloud, make sure to check out our jobs page!